Testing Becomes a Design Constraint at Scale

In early-stage systems, testing is primarily a development concern. Code changes are localized, deployments are infrequent, and failures are usually attributable to logic errors. In this phase, unit testing often provides adequate coverage by validating behavior within clearly defined boundaries.

As systems scale, testing shifts from a development activity to a design constraint. Failures increasingly occur at integration points rather than within individual components. Testing approaches that worked well early on begin to leave gaps, not because they are flawed, but because the system has outgrown them. This shift is common in larger custom software development engagements where architecture evolves faster than testing practices.

The Role of Unit Testing in Larger Codebases

Unit tests validate deterministic behavior. They confirm that functions, methods, and modules behave as expected given controlled inputs. Their value lies in speed, isolation, and precision of failure signals.

In larger codebases, unit testing is most effective when applied to:

- Business rules and domain logic
- Data transformations
- Validation and decision paths
- Areas undergoing active refactoring
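As a minimal sketch of the first bullet, here is a hypothetical pricing rule with a few pytest-style tests. The function `apply_discount` and its half-up rounding policy are illustrative assumptions, not taken from any real system. Each test pins one deterministic input/output pair, so a failure points directly at the rule that changed.

```python
from decimal import Decimal, ROUND_HALF_UP


def apply_discount(subtotal: Decimal, percent: int) -> Decimal:
    """Hypothetical pricing rule: apply a percentage discount, rounded to cents."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    discounted = subtotal * Decimal(100 - percent) / Decimal(100)
    return discounted.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


def test_ten_percent_discount():
    assert apply_discount(Decimal("200.00"), 10) == Decimal("180.00")


def test_rounds_half_up_to_cents():
    # 10.05 * 0.5 = 5.025; half-up gives 5.03 (banker's rounding would give 5.02)
    assert apply_discount(Decimal("10.05"), 50) == Decimal("5.03")


def test_rejects_out_of_range_percent():
    try:
        apply_discount(Decimal("10.00"), 120)
    except ValueError:
        return
    raise AssertionError("expected ValueError for percent > 100")
```

Because these are plain functions using `assert`, pytest would collect them automatically, and they can also be called directly. They run in milliseconds and need no environment, which is what makes them cheap to keep alongside frequently changing rules.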

Unit tests are also a practical entry point for legacy systems. They allow teams to document existing behavior before making structural changes, which is often a prerequisite for modernizing legacy applications.
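Tests written this way are often called characterization tests: they record what the code does today rather than what a specification says it should do. A minimal sketch, assuming a hypothetical legacy function `legacy_invoice_code` with a quirk the team has decided to preserve until the refactor is complete:

```python
def legacy_invoice_code(customer_id: int, sequence: int) -> str:
    """Stand-in for hypothetical legacy code whose current behavior we want to pin down."""
    return f"INV-{customer_id}-{sequence % 10000:04d}"


# Outputs observed from the current implementation, not from a specification.
CHARACTERIZED_CASES = [
    ((42, 7), "INV-42-0007"),
    ((42, 9999), "INV-42-9999"),
    # Surprising, but this is what the system does today: only the last
    # four digits of the sequence survive. Preserved until a deliberate change.
    ((42, 10001), "INV-42-0001"),
]


def test_legacy_invoice_code_matches_recorded_behavior():
    for args, expected in CHARACTERIZED_CASES:
        assert legacy_invoice_code(*args) == expected, f"behavior changed for {args}"
```

If the quirk is later fixed on purpose, the recorded case is updated in the same change, which makes the behavior change explicit in review instead of incidental.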

What unit tests do not provide is assurance that components interact correctly once assembled. They are not designed to surface issues related to configuration, contracts, or runtime environments.
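The sketch below illustrates that gap with hypothetical `PaymentGateway` and `CheckoutService` classes. A bare `unittest.mock.Mock` accepts any call, so the unit-level check passes even though the service calls the gateway with a keyword argument the real client does not accept. The mismatch only appears once the real objects are wired together.

```python
from unittest.mock import Mock


class PaymentGateway:
    """Hypothetical real client: expects an amount in cents and a currency code."""

    def charge(self, amount_cents: int, currency: str) -> str:
        return f"ch_{amount_cents}_{currency}"


class CheckoutService:
    def __init__(self, gateway):
        self.gateway = gateway

    def checkout(self, total_cents: int) -> str:
        # Boundary bug: wrong keyword name and no currency argument.
        return self.gateway.charge(amount=total_cents)


# Unit test with a bare Mock: any call is accepted, so this passes.
mock_gateway = Mock()
mock_gateway.charge.return_value = "ch_mock"
mock_result = CheckoutService(mock_gateway).checkout(1250)
assert mock_result == "ch_mock"
mock_gateway.charge.assert_called_once_with(amount=1250)

# The same code wired to the real client: the contract mismatch surfaces.
real_error = None
try:
    CheckoutService(PaymentGateway()).checkout(1250)
except TypeError as exc:
    real_error = exc
    print("contract mismatch:", exc)
```

Building the double with `unittest.mock.create_autospec(PaymentGateway, instance=True)` would catch this particular signature mismatch, but it still checks only against a local class definition, not against how a remote service or its configuration behaves at runtime.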

Why Integration Testing Becomes More Important Over Time

Integration testing exists to validate behavior across boundaries.

As architectures become more modular or distributed, correctness depends less on individual components and more on how those components coordinate. Database interactions, service communication, message handling, and external dependencies introduce failure modes that isolated tests cannot detect.

Integration tests address this by exercising real interactions under controlled conditions. That realism comes at a cost: execution is slower, failures are harder to trace to a single cause, and the test environments themselves must be stable.

Despite these costs, integration testing becomes increasingly relevant as systems grow. Many high-impact failures in mature systems stem from incorrect assumptions between components rather than incorrect logic within them. Aligning integration coverage with CI/CD pipeline design, for example by running fast unit suites on every commit and integration suites before merge or deployment, is often necessary to keep reliability from degrading as release velocity increases.
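A minimal sketch of an integration test at the persistence boundary, assuming a hypothetical `InvoiceRepository` and using Python's built-in `sqlite3` with an in-memory database as the controlled environment. A mocked connection would accept a duplicate insert without complaint; the real engine enforces the `UNIQUE` constraint, so the test exercises behavior that only exists when schema and code run together. SQLite is a stand-in here: if production runs a different engine, its constraint and transaction behavior may differ, which is one reason teams often test against the same engine they deploy.

```python
import sqlite3

SCHEMA = """
CREATE TABLE invoices (
    id INTEGER PRIMARY KEY,
    order_id TEXT NOT NULL UNIQUE,
    amount_cents INTEGER NOT NULL
)
"""


class InvoiceRepository:
    """Hypothetical repository that issues real SQL against a connection."""

    def __init__(self, conn: sqlite3.Connection):
        self.conn = conn

    def create(self, order_id: str, amount_cents: int) -> int:
        with self.conn:  # commits on success, rolls back if the INSERT raises
            cur = self.conn.execute(
                "INSERT INTO invoices (order_id, amount_cents) VALUES (?, ?)",
                (order_id, amount_cents),
            )
        return cur.lastrowid

    def total_for(self, order_id: str) -> int:
        (total,) = self.conn.execute(
            "SELECT COALESCE(SUM(amount_cents), 0) FROM invoices WHERE order_id = ?",
            (order_id,),
        ).fetchone()
        return total


def make_repository() -> InvoiceRepository:
    # Controlled environment: a fresh in-memory database per test.
    conn = sqlite3.connect(":memory:")
    conn.executescript(SCHEMA)
    return InvoiceRepository(conn)


def test_duplicate_invoice_for_same_order_is_rejected():
    repo = make_repository()
    repo.create("order-1", 5000)
    try:
        repo.create("order-1", 5000)
    except sqlite3.IntegrityError:
        pass  # the database, not the Python code, enforces uniqueness
    else:
        raise AssertionError("expected the UNIQUE constraint to reject the duplicate")
    # The failed insert was rolled back; only the first invoice counts.
    assert repo.total_for("order-1") == 5000
```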

A Common Misalignment in Testing Strategy

Teams often treat unit testing and integration testing as interchangeable approaches, adjusting emphasis based on convenience rather than risk.

This leads to predictable outcomes. Heavy unit coverage without sufficient integration validation produces systems that behave correctly in isolation but fail under real conditions. Excessive reliance on integration testing slows feedback loops and reduces developer trust in test results.

The underlying issue is not test quantity, but alignment. Each testing approach addresses a different category of risk. Treating one as a substitute for the other leaves important failure surfaces untested.

Architectural Context Matters

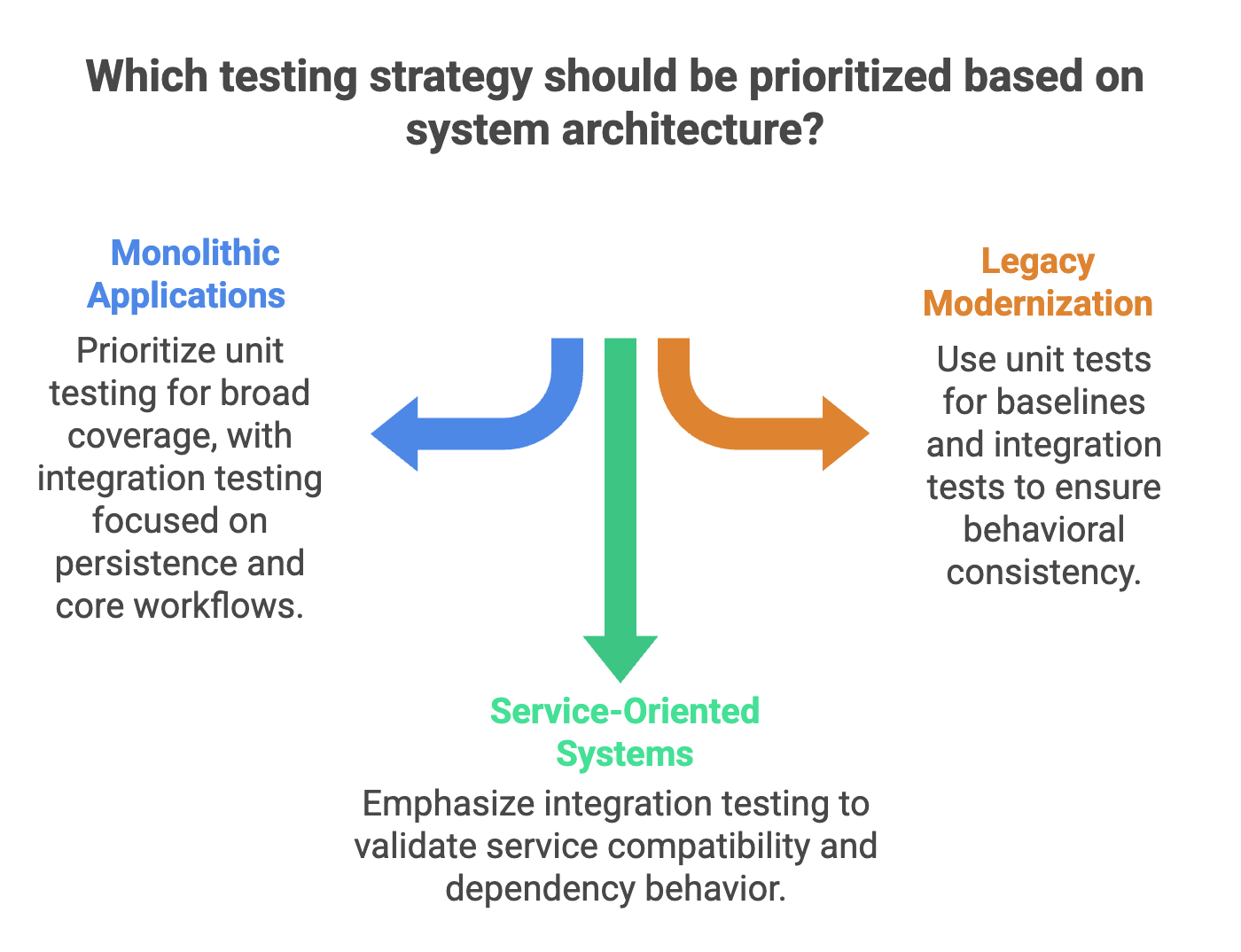

The appropriate balance between unit and integration testing depends heavily on system architecture.

In monolithic applications, unit testing often provides the majority of coverage, with integration testing focused on persistence and core workflows.

In service-oriented or distributed systems, integration points become primary risk areas. Contract validation, service compatibility, and dependency behavior warrant greater testing attention. These considerations are typically part of broader scalable software architecture planning initiatives rather than isolated QA decisions.
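One lightweight form of contract validation is a consumer-side check: the consuming service declares the fields and types it depends on, and the provider's responses are verified against that declaration in CI. The sketch below assumes a hypothetical accounts service and a hand-rolled checker, `contract_violations`; dedicated tools such as Pact formalize the same idea with versioned contracts shared between teams.

```python
# Fields and types a hypothetical billing consumer relies on from an accounts service.
ACCOUNT_CONTRACT = {
    "account_id": str,
    "plan": str,
    "seats": int,
    "active": bool,
}


def contract_violations(payload: dict, contract: dict) -> list:
    """Return human-readable problems; an empty list means the payload satisfies the contract."""
    problems = []
    for field, expected_type in contract.items():
        if field not in payload:
            problems.append(f"missing field: {field}")
        # Exact type check, so that e.g. True is not accepted where an int is expected.
        elif type(payload[field]) is not expected_type:
            actual = type(payload[field]).__name__
            problems.append(f"{field}: expected {expected_type.__name__}, got {actual}")
    return problems


# In CI these payloads would come from the provider's test environment or
# recorded responses; they are inlined here for illustration.
compatible = {"account_id": "acc_1", "plan": "pro", "seats": 5, "active": True, "region": "eu"}
breaking = {"account_id": "acc_1", "plan": "pro", "seats": "5"}

print(contract_violations(compatible, ACCOUNT_CONTRACT))  # []
print(contract_violations(breaking, ACCOUNT_CONTRACT))
# ['seats: expected int, got str', 'missing field: active']
```

Extra fields such as `region` are tolerated on purpose, so a provider can add data without breaking consumers; removing or retyping a field the consumer relies on is flagged.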

Legacy modernization introduces additional constraints. Unit tests help establish behavioral baselines, while integration tests help ensure that externally visible behavior remains consistent as systems are restructured.

Testing strategy that does not account for architectural context tends to age poorly.

An Example of Risk Concentration

Consider a billing subsystem within a SaaS platform.

Pricing logic and discount calculations benefit from unit testing due to their deterministic nature and frequency of change. The more consequential risks, however, often appear elsewhere: payment processing, retries, partial failures, and persistence ordering.

These risks span multiple components and cannot be fully validated through isolated tests. Integration testing exists to surface these issues before they reach production.

Focusing testing effort solely on one layer leaves exposure in the other.
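A sketch of the kind of integration test the billing example calls for, under stated assumptions: `FlakyGateway` is a hypothetical test double that accepts the first charge and then raises a timeout (a partial failure), `BillingService` is a hypothetical service that retries with a stable idempotency key, and an in-memory SQLite table records invoice state. The sketch assumes the gateway deduplicates requests by key, as the double does. The test checks three cross-component properties at once: the retry happened, the customer was charged exactly once, and the invoice is marked paid only after a charge is confirmed.

```python
import sqlite3


class TransientGatewayError(Exception):
    """Raised by the test double to simulate a timeout or dropped connection."""


class FlakyGateway:
    """Hypothetical test double: the first call succeeds upstream but then times out."""

    def __init__(self):
        self.charges = {}  # idempotency key -> amount actually charged
        self.calls = 0

    def charge(self, idempotency_key: str, amount_cents: int) -> str:
        self.calls += 1
        # Deduplicate by key: a retry with the same key does not charge twice.
        self.charges.setdefault(idempotency_key, amount_cents)
        if self.calls == 1:
            raise TransientGatewayError("timed out after the charge was accepted")
        return f"ch_{idempotency_key}"


class BillingService:
    def __init__(self, conn: sqlite3.Connection, gateway, max_attempts: int = 3):
        self.conn = conn
        self.gateway = gateway
        self.max_attempts = max_attempts

    def _charge_with_retry(self, key: str, amount_cents: int) -> str:
        for attempt in range(1, self.max_attempts + 1):
            try:
                return self.gateway.charge(key, amount_cents)
            except TransientGatewayError:
                if attempt == self.max_attempts:
                    raise
        raise ValueError("max_attempts must be at least 1")

    def pay_invoice(self, invoice_id: int) -> str:
        (amount,) = self.conn.execute(
            "SELECT amount_cents FROM invoices WHERE id = ?", (invoice_id,)
        ).fetchone()
        # The key is derived from the invoice, so every retry reuses it.
        charge_id = self._charge_with_retry(f"invoice-{invoice_id}", amount)
        # Persist only after the charge is confirmed.
        with self.conn:
            self.conn.execute(
                "UPDATE invoices SET status = 'paid', charge_id = ? WHERE id = ?",
                (charge_id, invoice_id),
            )
        return charge_id


def test_retry_after_partial_failure_charges_once():
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE invoices (id INTEGER PRIMARY KEY, amount_cents INTEGER NOT NULL,"
        " status TEXT NOT NULL DEFAULT 'open', charge_id TEXT)"
    )
    with conn:
        conn.execute("INSERT INTO invoices (id, amount_cents) VALUES (1, 4999)")
    gateway = FlakyGateway()

    charge_id = BillingService(conn, gateway).pay_invoice(1)

    assert gateway.calls == 2                      # one failure, one retry
    assert gateway.charges == {"invoice-1": 4999}  # customer charged exactly once
    row = conn.execute("SELECT status, charge_id FROM invoices WHERE id = 1").fetchone()
    assert row == ("paid", charge_id)
```

The property under test (one charge, consistent invoice state) spans the retry loop, the gateway's deduplication behavior, and the database write, which is why it is awkward to express as an isolated unit test.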

Testing as Risk Management

In scalable architectures, testing is not an exercise in completeness. It is a mechanism for managing risk.

Unit testing provides confidence in internal correctness. Integration testing provides confidence in system behavior. As systems grow, both forms of confidence are necessary, though rarely in equal proportion.

When testing strategy evolves alongside architecture, it reinforces system stability. When it does not, the system grows more fragile even as test volume increases.

If you’re evaluating how your current testing approach aligns with your system design or planning changes tied to growth, contact Curotec to review your architecture and identify where targeted improvements can reduce operational risk.